Image generated with Google Gemini

The Problem

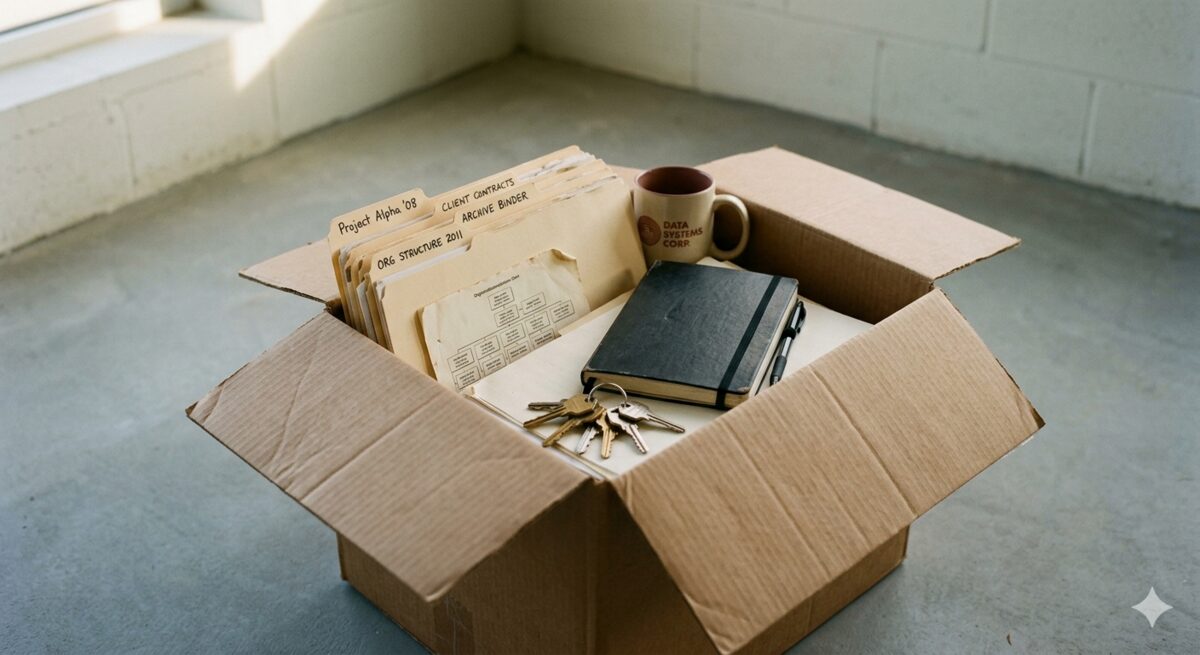

When I left Aware, a health-tech startup in Berlin, I faced a problem I'd seen play out badly at every company I'd worked at before. All the knowledge about how the People function actually ran existed nowhere except in my head.

This is normal for startups. Nobody writes process documentation at a startup. There's always something more urgent.

You figure out how German parental leave law works when someone gets pregnant. You learn the payroll cycle by running it wrong once. You develop a severance negotiation approach through trial and error over several years. And then one day you leave — and all of that walks out the door with you.

The standard handover is a couple of calls and a half-finished Google Doc. I know because I've been on the receiving end. It's not malice. The departing person genuinely wants to help.

But "document everything you know" is a paralysing brief. You don't know where to start. You can't tell how much detail is enough. What feels obvious to you won't be obvious to your successor.

I had maybe two weeks. And I had a hypothesis: that AI could transform the handover process from "expert tries to write everything down" to "expert has structured conversations while AI does the documentation."

The Approach

I started with structure, not content. Before documenting anything, I spent about an hour with AI building a table of contents: 13 chapters covering the full scope of the People role, including legal entities, hiring, contracts, payroll, onboarding, benefits, leave, terminations, immigration, compliance, tools, and open items. This gave me a framework to fill rather than a blank page to stare at.

That distinction matters. A blank page asks "what do you know?" — which is overwhelming. A chapter structure asks "what do you know about this?" — which is answerable.

I uploaded this framework to the AI and asked for a critique. What came back was a detailed gap analysis: what was missing from each chapter, what needed more depth, what I hadn't thought of. This was useful on its own — it showed me the shape of the problem before I started solving it.

Then I proposed the approach that ended up working: an AI agent that would interview me, chapter by chapter, and synthesise my answers into structured documentation in real time. I'd answer questions conversationally. The agent would turn those answers into professional prose, organised logically, with consistent formatting across all chapters.

Small Batches

Working in small batches was the single most important tactical discovery. Early on, the agent was asking five or six questions at a time. I pushed back: too many. We settled on two or three questions per round.

This number wasn't arbitrary. Two or three questions sit comfortably in working memory. You can think about all of them at once without writing anything down.

But the small batch size did more than reduce cognitive load. It gave me quality control.

After each round of answers, I could see exactly how the AI had processed my input. I could catch misunderstandings immediately rather than discovering them buried in a 20-page document later. When the AI misinterpreted something — like inventing a cost-saving severance structure I'd never actually described — I caught it in the next exchange and corrected it.

Small batches also reduced hallucination risk. In my experience, the more data you feed an AI in one go, and the more you ask it to produce at once, the higher the chance of fabrication. By working in tight loops (a few questions, a few answers, a short synthesis), I kept each cycle simple enough for the AI to handle accurately.
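For anyone who wants to reproduce the loop, here is a minimal Python sketch of the small-batch pattern. It is not the setup I actually used: the function names (`question_rounds`, `run_chapter`) and the `ask`, `synthesise` and `review` callbacks are hypothetical stand-ins for the expert answering, the AI drafting, and the expert checking the draft.

```python
from typing import Callable, Iterator


def question_rounds(questions: list[str], max_per_round: int = 3) -> Iterator[list[str]]:
    """Yield the questions in consecutive rounds of at most max_per_round."""
    if max_per_round < 1:
        raise ValueError("max_per_round must be at least 1")
    for start in range(0, len(questions), max_per_round):
        yield questions[start:start + max_per_round]


def run_chapter(
    questions: list[str],
    ask: Callable[[str], str],
    synthesise: Callable[[list[str], list[str]], str],
    review: Callable[[str], str],
    max_per_round: int = 3,
) -> list[str]:
    """Interview in small rounds; each short draft is reviewed before the next round."""
    approved: list[str] = []
    for batch in question_rounds(questions, max_per_round):
        answers = [ask(question) for question in batch]  # expert answers conversationally
        draft = synthesise(batch, answers)               # placeholder for the AI drafting step
        approved.append(review(draft))                   # expert accepts or corrects the draft
    return approved
```

The point of the structure is that `review` runs once per round, so a misunderstanding surfaces after two or three answers rather than after a whole chapter.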

What the Agent Did

The AI's job was to be an intelligent documentarian. It asked structured questions that surfaced knowledge I wouldn't have thought to write down.

It transformed conversational answers — "yeah so basically we just email the export and she processes it" — into operational documentation: "Generate payroll export from Personio, upload to NetFiles, and notify the external payroll provider that the file is ready for processing." It maintained consistent structure and formatting across all 13 chapters. And it identified gaps — moments where my answer implied a process existed but I hadn't described it, or where two chapters referenced the same thing differently.

What it was not supposed to do was invent anything. The agent's brief was never to create processes I hadn't described, add best practices I hadn't confirmed, or fill gaps with assumptions. When it slipped, as with the severance structure, the next review round caught it. When information was missing, it flagged the gap explicitly.

This boundary is critical. The moment an AI starts generating plausible-sounding content that doesn't come from the expert, the documentation becomes unreliable. And unreliable handover documentation is worse than no documentation — it creates false confidence.
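One way to make that boundary concrete is to require every documented statement to carry the expert answer it came from, and to record anything unanswered as an open gap instead of filling it in. The sketch below is an illustration under that assumption, not the agent's actual implementation. The `Chapter` class and its methods are hypothetical, and the gap question in the example is invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Chapter:
    title: str
    # Each statement is stored with the expert answer it was derived from.
    statements: list[tuple[str, str]] = field(default_factory=list)
    gaps: list[str] = field(default_factory=list)

    def add_statement(self, text: str, source_answer: str) -> None:
        """Record a documented step only if it traces back to an expert answer."""
        if not source_answer.strip():
            raise ValueError("statement has no source answer; flag a gap instead")
        self.statements.append((text, source_answer))

    def flag_gap(self, description: str) -> None:
        """Record missing information explicitly rather than guessing."""
        self.gaps.append(description)

    def render(self) -> str:
        lines = [self.title]
        lines += [f"- {text}" for text, _source in self.statements]
        lines += [f"- OPEN: {gap}" for gap in self.gaps]
        return "\n".join(lines)


payroll = Chapter("Payroll")
payroll.add_statement(
    "Generate payroll export from Personio.",
    "yeah so basically we just email the export and she processes it",
)
# Hypothetical gap: something the answer implies but never states.
payroll.flag_gap("Who approves the export before it is sent?")
print(payroll.render())
```

Because open items are rendered alongside confirmed steps, the successor can see at a glance what is known and what still needs an answer.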

The Result

The end result was 13 chapters totalling roughly 10,000 words, covering the entire People function from legal entity structure through hiring, contracts, payroll, onboarding, benefits, leave management, terminations, immigration, compliance, and tools. Total human effort: about 15 hours. Elapsed time: two weeks from start to a reviewed, validated handover package.

For context: I estimate that writing it all from scratch would have taken 40 to 60 hours. More realistically, it wouldn't have happened at all.

At the end of the process, the external HR agency taking over the function told me explicitly that this was the best and most detailed handover they had ever received from a client. These are people who take over HR functions professionally. They've seen a lot of handovers. That feedback matters more to me than any self-assessment.

Who This Is For

This approach works best when three conditions are met: someone with significant institutional knowledge is leaving, there's a time constraint that makes traditional documentation impractical, and the departing person is willing to talk through what they know even if they wouldn't sit down and write it.

That covers a lot of ground. Head of People leaving a startup. Finance lead handing over month-end close. Operations manager who built the logistics function from scratch. Product leader who holds the roadmap context and stakeholder relationships.

The domain doesn't matter — the pattern is the same. Someone built something, learned how it works through experience, and needs to transfer that knowledge before they go.

Want to know more? There's a detailed case study covering the full methodology, workflow, output examples, time investment, validation process, and what I'd do differently next time. If you'd like a copy, or if you're facing a similar knowledge transfer challenge, get in touch and I'd be happy to share it.